What is AIOps?

AIOps (AI Operations) is the practice of operating AI applications across their full lifecycle, from development and evaluation through deployment and production monitoring. This encompasses LLM applications and agents, RAG systems, and traditional machine learning models.

Historically, "AIOps" referred to using AI for IT operations (automated log analysis, anomaly detection, incident management). Today, the term has also come to describe operations for AI: the practices and platforms needed to build, deploy, and maintain AI applications in production. As organizations adopt LLMs, agents, and ML models at scale, AIOps provides a unified framework to manage all of these workloads.

MLflow is the most adopted open-source AIOps platform, providing a unified stack for both LLMOps (tracing, evaluation, prompt management, AI Gateway) and traditional ML operations (experiment tracking, model registry).

Why AIOps Matters

AI applications, whether LLM-powered agents or traditional ML models, introduce operational challenges that standard DevOps can't address:

Fragmented AI Tooling

Problem: Teams use separate tools for ML experiment tracking, LLM tracing, evaluation, and deployment, creating tool sprawl and fragmented workflows.

Solution: A unified AIOps platform manages all AI workloads (ML models, LLM apps, and agents) under a single framework.

Quality at Scale

Problem: AI outputs are non-deterministic and can degrade silently, making it hard to maintain quality across thousands of daily requests.

Solution: Automated evaluation with LLM judges and continuous monitoring catch regressions before they reach users.
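The monitoring half of this idea can be shown with a minimal, framework-agnostic sketch. It compares the average judge score of a recent batch of requests against a baseline and flags a drop larger than a tolerance. The `detect_regression` helper, the 0-to-1 score scale, and the 0.05 tolerance are illustrative assumptions, not part of any MLflow API.

```python
from statistics import mean


def detect_regression(baseline_scores, current_scores, tolerance=0.05):
    """Hypothetical helper: True if the mean quality score dropped by more than `tolerance`.

    Scores are assumed to be per-request judge scores on a 0-to-1 scale.
    """
    if not baseline_scores or not current_scores:
        raise ValueError("both score lists must be non-empty")
    drop = mean(baseline_scores) - mean(current_scores)
    return drop > tolerance


# Example: last week's relevance scores vs. today's (made-up numbers)
baseline = [0.92, 0.88, 0.95, 0.90]
today = [0.81, 0.79, 0.85, 0.80]
print(detect_regression(baseline, today))  # → True (mean fell by about 0.10)
```

A real monitor would usually also require a minimum sample size before alerting, so that a handful of unusual requests does not trigger a false alarm.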

Reproducibility

Problem: Without systematic tracking of parameters, data, models, and prompts, AI experiments and deployments become impossible to reproduce.

Solution: Experiment tracking and model registries capture every artifact, enabling full reproducibility across ML and LLM workloads.
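The following stdlib-only sketch shows what systematic tracking buys you. Record the parameters, a hash of the training data, and the prompt template together. An identical configuration then always maps to the same fingerprint, and any change produces a different one. The `run_fingerprint` helper and its field names are hypothetical. Experiment trackers such as MLflow store these values as run parameters, tags, and artifacts rather than through a function like this.

```python
import hashlib
import json


def sha256_of_bytes(data: bytes) -> str:
    """Content hash used to identify a dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


def run_fingerprint(params: dict, data_hash: str, prompt_template: str) -> str:
    """Hypothetical helper: deterministic ID for everything that defines a run."""
    record = {"params": params, "data_hash": data_hash, "prompt": prompt_template}
    # sort_keys makes the JSON (and therefore the hash) independent of dict ordering
    canonical = json.dumps(record, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


data_hash = sha256_of_bytes(b"id,label\n1,spam\n2,ham\n")
a = run_fingerprint({"learning_rate": 0.01, "epochs": 100}, data_hash, "Summarize: {{text}}")
b = run_fingerprint({"epochs": 100, "learning_rate": 0.01}, data_hash, "Summarize: {{text}}")
c = run_fingerprint({"learning_rate": 0.02, "epochs": 100}, data_hash, "Summarize: {{text}}")
print(a == b, a == c)  # → True False
```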

Cost & Resource Management

Problem: AI workloads consume expensive compute (GPU training) and incur API costs (LLM tokens) that can spiral without visibility.

Solution: AIOps platforms track resource usage across all AI workloads, helping teams optimize costs and allocate resources effectively.
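Once usage is recorded per request, token-based LLM spend is simple arithmetic: cost = input tokens × input price + output tokens × output price. The sketch below aggregates that cost per model. The model names and per-million-token prices are placeholders, not real provider rates, and `cost_by_model` is a hypothetical helper.

```python
from collections import defaultdict

# Placeholder prices in USD per 1M tokens (assumptions, not real provider rates)
PRICES_PER_MILLION = {
    "model-a": {"input": 2.00, "output": 8.00},
    "model-b": {"input": 0.40, "output": 1.60},
}


def cost_by_model(usage_records):
    """Sum the USD cost of LLM calls, grouped by model.

    Each record is a dict with 'model', 'input_tokens', and 'output_tokens',
    for example as extracted from traced LLM calls.
    """
    totals = defaultdict(float)
    for rec in usage_records:
        price = PRICES_PER_MILLION[rec["model"]]
        totals[rec["model"]] += (
            rec["input_tokens"] * price["input"] + rec["output_tokens"] * price["output"]
        ) / 1_000_000
    return dict(totals)


records = [
    {"model": "model-a", "input_tokens": 1_200, "output_tokens": 300},
    {"model": "model-a", "input_tokens": 800, "output_tokens": 200},
    {"model": "model-b", "input_tokens": 5_000, "output_tokens": 1_000},
]
print(cost_by_model(records))
```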

What Modern AIOps Covers

Modern AIOps is the operational discipline for all AI applications. It unifies the practices previously split across MLOps (for traditional ML) and LLMOps (for LLM applications) into a single framework that covers:

- LLMOps / AgentOps: Tracing, evaluation, prompt management, and production monitoring for LLM applications, agents, and RAG systems.

- ML Operations: Experiment tracking, model registry, and model deployment for traditional ML workflows using frameworks like scikit-learn, PyTorch, and TensorFlow.

- Cross-Cutting Concerns: Governance, audit trails, access control, cost tracking, and compliance that apply to all AI workloads regardless of type.

The key insight behind modern AIOps is that organizations rarely build with just one type of AI. Most teams have a mix of traditional ML models, LLM-powered features, and increasingly autonomous agents. AIOps provides a unified platform to operationalize all of these, preventing tool sprawl and ensuring consistent practices across all their AI work.

AIOps is closely related to AI observability (the monitoring and understanding subset) and LLMOps (the LLM-specific subset). AIOps is the broadest term, encompassing both and adding experiment management, model versioning, and unified deployment.

Key AIOps Capabilities

A comprehensive AIOps platform combines capabilities for both LLMOps/AgentOps and traditional ML workloads:

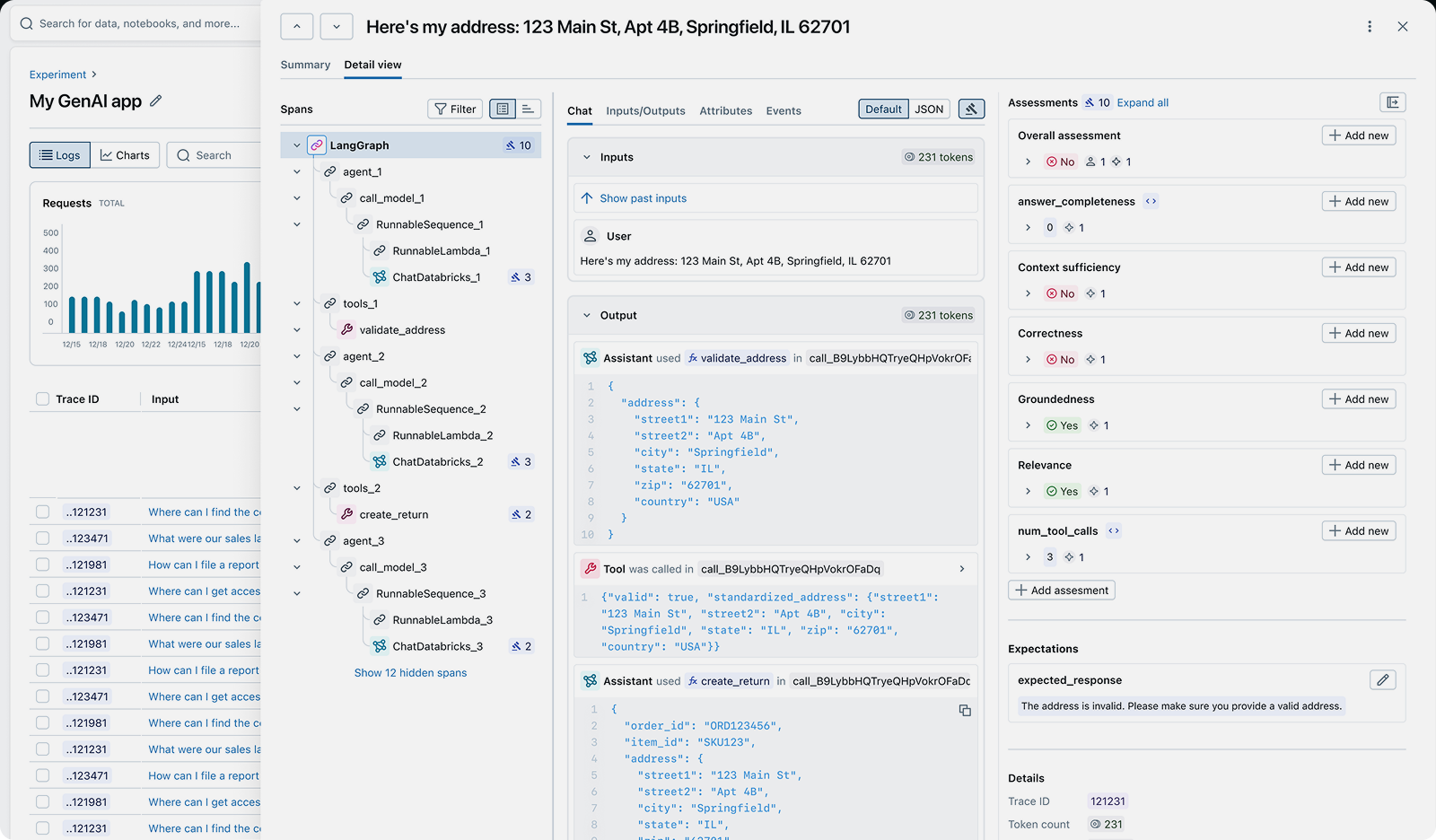

- Tracing: Record every step of LLM and agent execution (prompts, completions, tool calls, retrieval results, token usage, and latency) for debugging and monitoring.

- Evaluation: Assess AI output quality using LLM judges, custom scorers, and traditional metrics across all workload types.

- Experiment Tracking: Log parameters, metrics, and artifacts for ML experiments and LLM development, enabling comparison and reproducibility.

- Model Registry: Version, stage, and manage the lifecycle of ML models and LLM configurations in a centralized registry.

- Prompt Management: Version-control prompt templates, track production usage, and enable safe rollbacks for LLM applications.

- AI Gateway: Route requests across LLM providers through a single endpoint with unified authentication, rate limiting, and fallback routing.

- Production Monitoring: Track quality scores, error rates, costs, and drift over time to catch regressions across all AI workloads.
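Fallback routing, one of the gateway features listed above, can be sketched as follows: try providers in priority order and return the first successful response. In this stdlib-only sketch the providers are plain Python callables standing in for real LLM clients. The `route_with_fallback` helper and the provider functions are hypothetical. The sketch illustrates the routing logic only and is not the MLflow AI Gateway's configuration or implementation.

```python
from typing import Callable, Sequence, Tuple


class AllProvidersFailed(Exception):
    """Raised when every provider in the route fails."""


def route_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Return (provider_name, response) from the first provider that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # e.g. timeout, rate limit, outage
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients
def primary(prompt: str) -> str:
    raise TimeoutError("rate limited")


def secondary(prompt: str) -> str:
    return f"echo: {prompt}"


print(route_with_fallback("Summarize MLflow", [("primary", primary), ("secondary", secondary)]))
# → ('secondary', 'echo: Summarize MLflow')
```

Collecting every provider's error before raising keeps the failure diagnosable when the whole route is down.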

How to Implement AIOps

MLflow provides a complete, open-source AIOps platform. Here's how teams use MLflow across different AI workloads:

LLM Tracing

```python
import mlflow
from openai import OpenAI

# Enable automatic tracing for OpenAI
mlflow.openai.autolog()

# Every LLM call is now traced with full context
client = OpenAI()
response = client.chat.completions.create(
    model="gpt-4.1",
    messages=[{"role": "user", "content": "Summarize MLflow"}],
)
```
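Conceptually, each traced step becomes a span that records the step's inputs, outputs, status, and latency. Autologging does this for supported libraries such as OpenAI, and MLflow also provides an `@mlflow.trace` decorator for your own functions. The stdlib-only sketch below imitates the idea with a hypothetical `traced` decorator and an in-memory `SPANS` list, so you can see what gets captured. It keeps a flat list rather than a parent/child span tree, and it is not how MLflow implements tracing.

```python
import functools
import time

SPANS = []  # in-memory sink; a real tracer exports spans to a backend


def traced(func):
    """Hypothetical decorator: record name, inputs, output, status, and latency of each call."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        span = {"name": func.__name__, "inputs": {"args": args, "kwargs": kwargs}}
        try:
            span["outputs"] = func(*args, **kwargs)
            span["status"] = "OK"
            return span["outputs"]
        except Exception as exc:
            span["status"] = "ERROR"
            span["error"] = repr(exc)
            raise
        finally:
            # Runs on success and failure, so every call is recorded
            span["latency_ms"] = (time.perf_counter() - start) * 1000
            SPANS.append(span)

    return wrapper


@traced
def retrieve(query: str) -> list:
    # Stand-in for a vector-store lookup
    return ["MLflow is an open-source AI platform."]


@traced
def answer(query: str) -> str:
    docs = retrieve(query)
    return f"Based on {len(docs)} document(s): {docs[0]}"


answer("What is MLflow?")
for s in SPANS:
    print(s["name"], s["status"], round(s["latency_ms"], 3))
```

Because a span is appended when its call finishes, the inner `retrieve` span is recorded before the outer `answer` span.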

Evaluation with LLM Judges

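```python
import mlflow
import mlflow.genai
from mlflow.genai.scorers import RelevanceToQuery, Safety

# Collect traced outputs (experiment ID "1" is an example; use the experiment holding your traces)
traces = mlflow.search_traces(experiment_ids=["1"])

# Evaluate the traced outputs with built-in LLM judges
results = mlflow.genai.evaluate(
    data=traces,
    scorers=[RelevanceToQuery(), Safety()],
)
```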
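Alongside LLM judges, deterministic code-based checks are cheap enough to run on every output. Recent MLflow versions let you wrap such a function with the `@scorer` decorator from `mlflow.genai.scorers`. The stdlib-only sketch below shows only the scoring logic and the per-scorer pass-rate aggregation. The scorer names, records, and `pass_rates` helper are hypothetical rather than MLflow API.

```python
from statistics import mean


def contains_expected_fact(outputs: str, expectations: dict) -> bool:
    """Hypothetical scorer: pass if the expected fact appears in the output."""
    return expectations["expected_fact"].lower() in outputs.lower()


def within_length_limit(outputs: str, max_words: int = 50) -> bool:
    """Hypothetical scorer: pass if the answer stays within a word budget."""
    return len(outputs.split()) <= max_words


def pass_rates(records, scorers):
    """Apply each scorer to every record and return the pass rate per scorer."""
    return {name: mean(float(fn(r)) for r in records) for name, fn in scorers.items()}


records = [
    {"outputs": "MLflow is an open-source platform for the AI lifecycle.",
     "expectations": {"expected_fact": "open-source"}},
    {"outputs": "It is a database.", "expectations": {"expected_fact": "open-source"}},
]
scorers = {
    "contains_expected_fact": lambda r: contains_expected_fact(r["outputs"], r["expectations"]),
    "within_length_limit": lambda r: within_length_limit(r["outputs"]),
}
print(pass_rates(records, scorers))
# → {'contains_expected_fact': 0.5, 'within_length_limit': 1.0}
```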

ML Experiment Tracking

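```python
import mlflow
from sklearn.datasets import load_iris
from sklearn.linear_model import SGDClassifier
from sklearn.metrics import accuracy_score, f1_score
from sklearn.model_selection import train_test_split

# Track traditional ML experiments
mlflow.set_experiment("my-classification-model")
X, y = load_iris(return_X_y=True)  # example dataset
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=42)

with mlflow.start_run():
    mlflow.log_param("learning_rate", 0.01)
    mlflow.log_param("epochs", 100)
    # Train the model: SGD with a constant learning rate; max_iter caps the number of epochs
    model = SGDClassifier(learning_rate="constant", eta0=0.01, max_iter=100, random_state=42)
    model.fit(X_train, y_train)
    preds = model.predict(X_test)
    mlflow.log_metric("accuracy", accuracy_score(y_test, preds))
    mlflow.log_metric("f1_score", f1_score(y_test, preds, average="macro"))  # macro-F1 for multiclass
    # Log the model artifact
    mlflow.sklearn.log_model(model, "model")
```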
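Comparing logged runs usually means sorting them by a metric. In MLflow, `mlflow.search_runs` accepts an `order_by` argument such as `["metrics.accuracy DESC"]` and returns a pandas DataFrame. The stdlib-only sketch below reproduces just that selection logic over plain dictionaries, so it runs without a tracking server. The run records and the `best_run` helper are hypothetical.

```python
# Placeholder run records, shaped like what a tracker stores per run
runs = [
    {"run_id": "run-1", "params": {"learning_rate": 0.1, "epochs": 50}, "metrics": {"accuracy": 0.91}},
    {"run_id": "run-2", "params": {"learning_rate": 0.01, "epochs": 100}, "metrics": {"accuracy": 0.95}},
    {"run_id": "run-3", "params": {"learning_rate": 0.001, "epochs": 200}, "metrics": {"accuracy": 0.93}},
    {"run_id": "run-4", "params": {"learning_rate": 0.05, "epochs": 10}, "metrics": {}},  # failed run
]


def best_run(runs, metric, higher_is_better=True):
    """Hypothetical helper: return the run with the best value of `metric`.

    Runs that never logged the metric (e.g. failed runs) are skipped.
    """
    candidates = [r for r in runs if metric in r["metrics"]]
    if not candidates:
        raise ValueError(f"no run logged metric {metric!r}")
    pick = max if higher_is_better else min
    return pick(candidates, key=lambda r: r["metrics"][metric])


winner = best_run(runs, "accuracy")
print(winner["run_id"], winner["params"])  # → run-2 {'learning_rate': 0.01, 'epochs': 100}
```

Because the winning run carries its logged parameters, the best configuration can be re-run exactly. This is the reproducibility benefit described earlier.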

MLflow provides unified visibility across all AI operations: LLM tracing, evaluation, and experiment tracking

MLflow is the largest open-source AI platform, with over 30 million monthly downloads. Backed by the Linux Foundation and licensed under Apache 2.0, it provides a complete AIOps stack with no vendor lock-in.

Open Source vs. Proprietary AIOps

When choosing an AIOps platform, the decision between open source and proprietary SaaS tools has significant long-term implications for your team, infrastructure, and data ownership.

Open Source (MLflow): With MLflow, you maintain complete control over your AIOps infrastructure and data. Deploy on your own infrastructure or use managed versions on Databricks, AWS, or other platforms. There are no per-seat fees, no usage limits, and no vendor lock-in. MLflow supports a wide range of AI frameworks, from scikit-learn and PyTorch to OpenAI and LangChain, under a single platform.

Proprietary SaaS Tools: Commercial AIOps platforms offer convenience, but at the cost of flexibility and control. They typically charge per seat or per usage volume, which can become expensive at scale. Your data is usually sent to the vendor's servers, raising privacy and compliance concerns. Most proprietary tools specialize in either ML or LLM workloads, not both, which leads to tool sprawl.

Why Teams Choose Open Source: Organizations building production AI applications increasingly choose MLflow because it offers production-ready AIOps for both ML and LLM workloads without giving up control of their data, cost predictability, or flexibility. The Apache 2.0 license and Linux Foundation backing ensure MLflow remains truly open and community-driven.